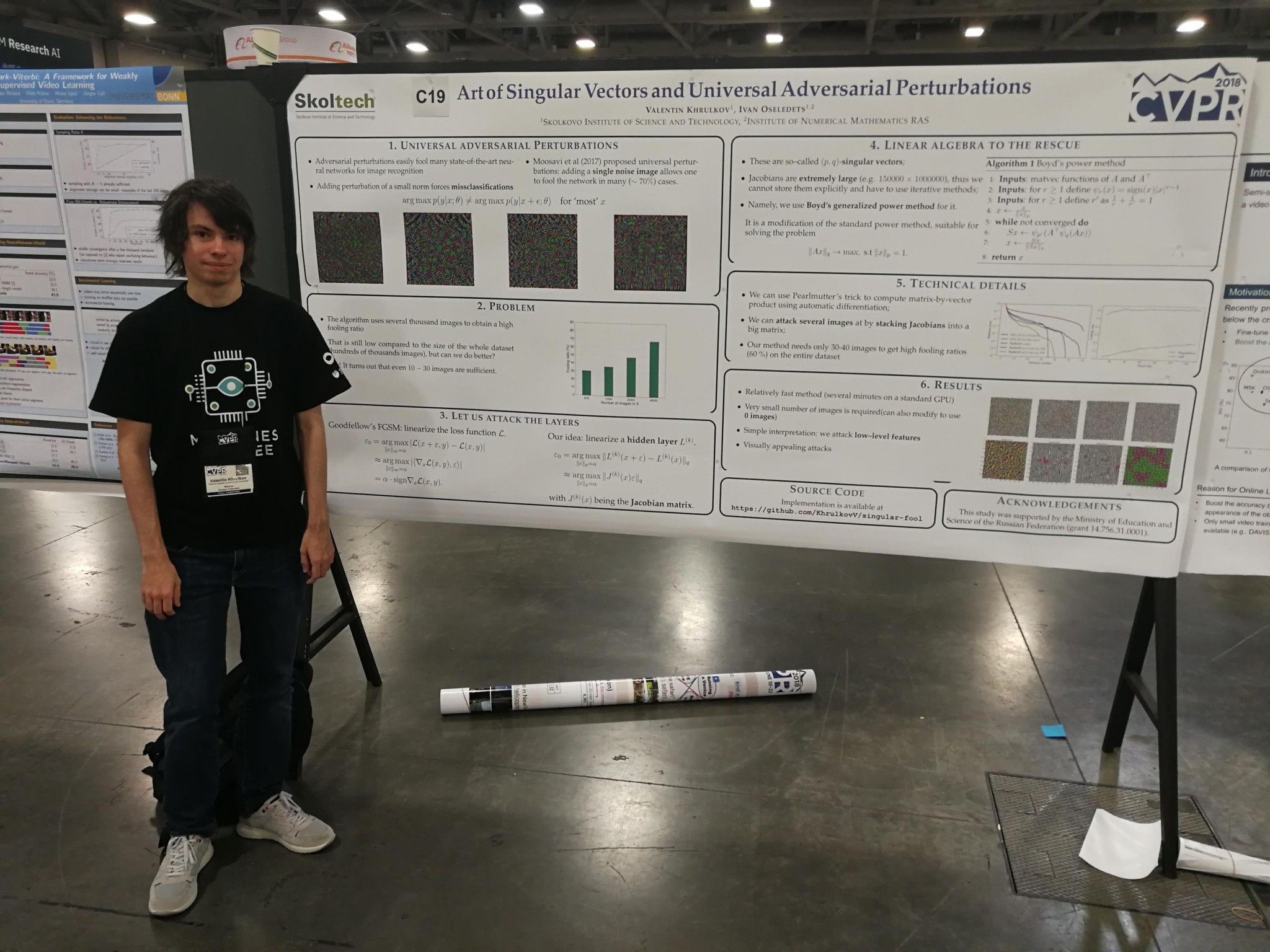

Skoltech PhD student Valentin Khrulkov was invited to deliver a spotlight presentation of his latest research on universal adversarial perturbations at the Conference on Computer Vision and Pattern Recognition (CVPR), which is widely regarded as the top computer vision conference in the world.

Khrulkov and his advisor, Professor Ivan Oseledets of the Skoltech Center for Computational Data-Intensive Science and Engineering (CDISE), traveled to Salt Lake City, Utah, to attend the event and present their research to scores of computer vision researchers from around the world.

This is particularly notable given that only about 6.6 percent of papers submitted to the prestigious conference are accepted.

Khrulkov explained that adversarial perturbations can be crafted to attack deep neural networks, causing them to misinterpret images. Facial recognition software offers one practical example: a perturbation can be designed to make the neural networks underpinning such software misclassify an individual’s face.

Oseledets likewise noted that universal adversarial examples are critically important because they can fool different networks. “For example, they demonstrate that the use of automatic deep neural network approaches can be subject to attacks; that is, people should be cautious when using machine learning in large-scale deployment, due, for example, to malicious content in online videos.”

Describing the project, Khrulkov said: “In this work, we focused on the so-called universal perturbations, which means that you do not design such an adversarial attack individually for each image, but rather use the same attack in all the cases. Surprisingly, such perturbations exist and are very effective for many popular networks. We came up with a new approach for constructing such attacks which requires knowing very little about the data used for training the network.” By comparison, he noted, previous approaches required several thousand images.

“Our approach also has a nice interpretation of why this attack is effective: it is common knowledge that in order to recognize an object in an image, deep neural networks in a way summarize information about the images on various levels. At low levels, it focuses on edges, boundaries, and so on. At higher levels, it focuses on patterns and so on. Our attack ruins this very low-level image understanding by introducing a lot of edge-like noise in the image, which in some sense acts like a beam of light blinding the network,” Khrulkov said.

Oseledets added: “We’ve provided a fast and efficient method to construct such an example by using tools from linear algebra.”

Asked what he believed set this project apart from the 93.4 percent of papers that were rejected by the conference, Oseledets said: “Our idea is fast and efficient, and has a very simple and elegant design. By comparison, previous approaches were much more complicated.”

Khrulkov agreed, stating: “I believe that the main reason why our submission was chosen is the simplicity and yet effectiveness of our idea, which allowed us to obtain stronger results than in previous work on this subject, while also being very instructive and interpretable.”

According to CVPR’s website, the aim of spotlight presentations is to provide promising authors with the opportunity to present their findings before a large audience.

Oseledets and his team also had two papers accepted at the prestigious International Conference on Machine Learning (ICML), which is being held this week in Stockholm.

Contact information:

Skoltech Communications

+7 (495) 280 14 81